Sensible Agent is an AI research framework and prototype from Google that chooses both the action an augmented reality (AR) agent should take and the interaction modality to deliver/confirm it, conditioned on real-time multimodal context (e.g., whether hands are busy, ambient noise, social setting). Rather than treating “what to suggest” and “how to ask” as separate problems, it computes them jointly to minimize friction and social awkwardness in the wild.

What interaction failure modes is it targeting?

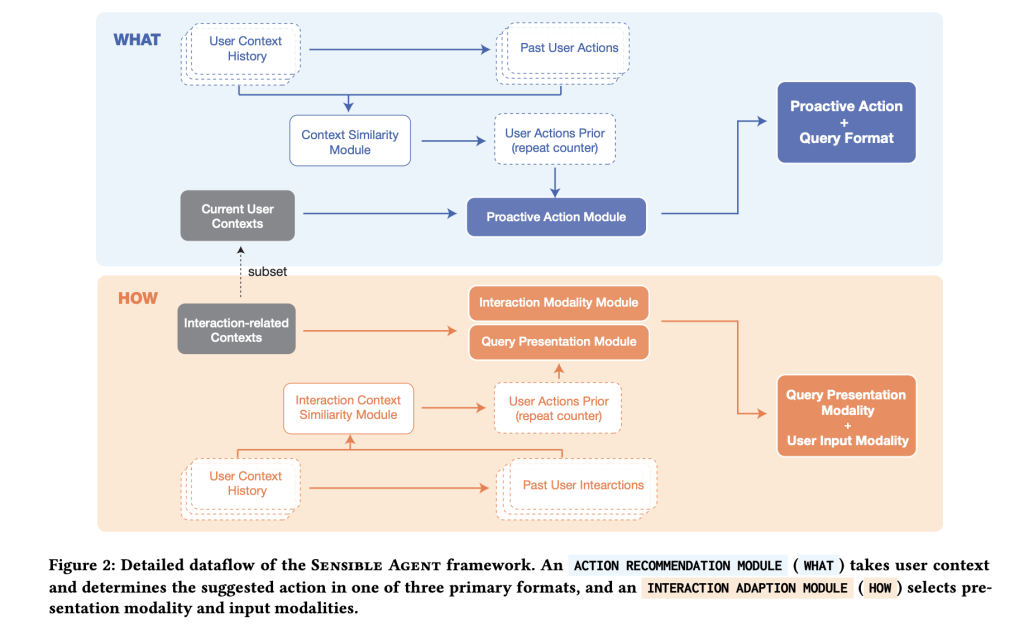

Voice-first prompting is brittle: it’s slow under time pressure, unusable with busy hands/eyes, and awkward in public. Sensible Agent’s core bet is that a high-quality suggestion delivered through the wrong channel is effectively noise. The framework explicitly models the joint decision of (a) what the agent proposes (recommend/guide/remind/automate) and (b) how it’s presented and confirmed (visual, audio, or both; inputs via head nod/shake/tilt, gaze dwell, finger poses, short-vocabulary speech, or non-lexical conversational sounds). By binding content selection to modality feasibility and social acceptability, the system aims to lower perceived effort while preserving utility.

How is the system architected at runtime?

A prototype on an Android-class XR headset implements a pipeline with three main stages. First, context parsing fuses egocentric imagery (vision-language inference for scene/activity/familiarity) with an ambient audio classifier (YAMNet) to detect conditions like noise or conversation. Second, a proactive query generator prompts a large multimodal model with few-shot exemplars to select the action, query structure (binary / multi-choice / icon-cue), and presentation modality. Third, the interaction layer enables only those input methods compatible with the sensed I/O availability, e.g., head nod for “yes” when whispering isn’t acceptable, or gaze dwell when hands are occupied.

Where do the few-shot policies come from—designer instinct or data?

The team seeded the policy space with two studies: an expert workshop (n=12) to enumerate when proactive help is useful and which micro-inputs are socially acceptable; and a context mapping study (n=40; 960 entries) across everyday scenarios (e.g., gym, grocery, museum, commuting, cooking) where participants specified desired agent actions and chose a preferred query type and modality given the context. These mappings ground the few-shot exemplars used at runtime, shifting the choice of “what+how” from ad-hoc heuristics to data-derived patterns (e.g., multi-choice in unfamiliar environments, binary under time pressure, icon + visual in socially sensitive settings).

What concrete interaction techniques does the prototype support?

For binary confirmations, the system recognizes head nod/shake; for multi-choice, a head-tilt scheme maps left/right/back to options 1/2/3. Finger-pose gestures support numeric selection and thumbs up/down; gaze dwell triggers visual buttons where raycast pointing would be fussy; short-vocabulary speech (e.g., “yes,” “no,” “one,” “two,” “three”) provides a minimal dictation path; and non-lexical conversational sounds (“mm-hm”) cover noisy or whisper-only contexts. Crucially, the pipeline only offers modalities that are feasible under current constraints (e.g., suppress audio prompts in quiet spaces; avoid gaze dwell if the user isn’t looking at the HUD).

Does the joint decision actually reduce interaction cost?

A preliminary within-subjects user study (n=10) comparing the framework to a voice-prompt baseline across AR and 360° VR reported lower perceived interaction effort and lower intrusiveness while maintaining usability and preference. This is a small sample typical of early HCI validation; it’s directional evidence rather than product-grade proof, but it aligns with the thesis that coupling intent and modality reduces overhead.

How does the audio side work, and why YAMNet?

YAMNet is a lightweight, MobileNet-v1–based audio event classifier trained on Google’s AudioSet, predicting 521 classes. In this context it’s a practical choice to detect rough ambient conditions—speech presence, music, crowd noise—fast enough to gate audio prompts or to bias toward visual/gesture interaction when speech would be awkward or unreliable. The model’s ubiquity in TensorFlow Hub and Edge guides makes it straightforward to deploy on device.

How can you integrate it into an existing AR or mobile assistant stack?

A minimal adoption plan looks like this: (1) instrument a lightweight context parser (VLM on egocentric frames + ambient audio tags) to produce a compact state; (2) build a few-shot table of context→(action, query type, modality) mappings from internal pilots or user studies; (3) prompt an LMM to emit both the “what” and the “how” at once; (4) expose only feasible input methods per state and keep confirmations binary by default; (5) log choices and outcomes for offline policy learning. The Sensible Agent artifacts show this is feasible in WebXR/Chrome on Android-class hardware, so migrating to a native HMD runtime or even a phone-based HUD is mostly an engineering exercise.

Summary

Sensible Agent operationalizes proactive AR as a coupled policy problem—selecting the action and the interaction modality in a single, context-conditioned decision—and validates the approach with a working WebXR prototype and small-N user study showing lower perceived interaction effort relative to a voice baseline. The framework’s contribution is not a product but a reproducible recipe: a dataset of context→(what/how) mappings, few-shot prompts to bind them at runtime, and low-effort input primitives that respect social and I/O constraints.

Check out the Paper and Technical details. Feel free to check out our GitHub Page for Tutorials, Codes and Notebooks. Also, feel free to follow us on Twitter and don’t forget to join our 100k+ ML SubReddit and Subscribe to our Newsletter.

Michal Sutter is a data science professional with a Master of Science in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.