AI chatbots are the new norm. What earlier was “ask Google” has now largely become “ask Claude”. And that is not just a change of platforms. The new form of conversational guidance goes a whole lot deeper than trying to find the best car for you or looking for an upskilling course. It now spills into just about every aspect of human life, and a new study by Anthropic confirms this, highlighting Claude’s extensive use for personal guidance by users across the world.

On the surface, the study by Anthropic sheds light on how exactly people are using Claude for personal guidance. Yet it goes a whole lot deeper, tackling a major issue that plagues just about every LLM today, Claude and ChatGPT included. It is an issue that can lead to you receiving bad advice from Claude, even if it does not mean to give it.

So, what is this issue? And more importantly, what is this study all about?

Let us explore that in detail here.

What is the new Anthropic Study?

On Thursday, Anthropic came out with a new study on the societal impacts of Claude. The findings are listed under a blog titled “How people ask Claude for personal guidance”. That title tells us a lot about the very intention of the study – to find how people are using Claude for personal guidance. This type of guidance covers several verticals. The report lists them as:

- Health/ Wellness

- Professional/ Career

- Relationships

- Financial

- Personal Development

- Spirituality

- Legal

- Consumer

- Parenting

- Other

The findings were based on 1 million Claude conversations from March to April 2026. After limiting the sample to unique users, this number came down to “roughly 639,000 conversations”. From these, Anthropic used classifiers to isolate the conversations that revolved purely around personal guidance, built on questions like “Should I…?” and “What do I do about…?”. The final number, around 38,000 conversations, was then divided into the nine named domains listed above. These covered 98% of conversations, while the remaining 2% were listed under ‘Other’.
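The funnel above is simple arithmetic, and it helps to see how narrow the final slice is. Here is a minimal sketch in Python that uses only the rounded figures quoted in this article, so every derived value is approximate. The variable names are ours, not Anthropic's.

```python
# Rounded figures quoted in the article; all derived values are approximate.
sampled_conversations = 1_000_000      # conversations sampled
unique_user_conversations = 639_000    # after limiting to unique users
guidance_conversations = 38_000        # classified as personal guidance

# Share of unique-user conversations that were about personal guidance.
guidance_share = guidance_conversations / unique_user_conversations

# Split of guidance conversations: nine named domains (98%) vs. "Other" (2%).
in_named_domains = guidance_conversations * 98 // 100
in_other = guidance_conversations - in_named_domains

print(f"Guidance share: {guidance_share:.1%}")
print(f"Named domains: ~{in_named_domains:,}, Other: ~{in_other:,}")
```

Going by these rounded numbers, roughly 6% of the unique-user conversations were requests for personal guidance.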

Interestingly, over 75% of these conversations could be summed up within 4 verticals. And this is exactly where exciting patterns began to emerge from the enormous data.


Anthropic Study: Findings

Based on the conversations that Anthropic researched, two main takeaways emerged:

- Over 75% of such conversations with Claude were concentrated in just four domains: health and wellness (27%), professional and career (26%), relationships (12%), and personal finance (11%).

- Claude’s sycophantic behaviour rose dramatically in very specific domains out of these, and that is an issue that AI makers like Anthropic are particularly worried about.

Which brings us to the core issue of the study:

Sycophancy: What is it?

Sycophancy typically means insincere or excessive flattery toward an influential person to gain an advantage. In terms of LLMs, we often see this in their responses to our queries. Have you ever observed ChatGPT or Claude agreeing with everything you say, calling it a “fantastic idea” or praising you with confident phrases like “you are leagues above others”? I am sorry to burst your bubble, but you are not alone. In the world of AI, this is a very common problem.

You see, the LLMs behind AI chatbots are often trained to be “helpful”. In most cases, this means building on the user’s idea and helping them further down the road to their success. However, in a social context, this often skips a super important aspect of human conversations: a different perspective.

After all, agreeing with every point someone makes may bring them momentary comfort, but it rarely benefits them in the long run.

And that is where AI models are falling short. Through this study, Anthropic has managed to find exactly the areas where Claude’s sycophantic behaviour shoots way over average.


How Claude Showed Sycophancy

In its study, Anthropic used an “automatic classifier” to judge Claude’s sycophancy. It worked on four main principles:

- Whether Claude pushed back

- Whether it maintained its position when challenged

- Whether its praise was proportional to the idea’s merit

- Whether it spoke frankly, regardless of what the person wanted to hear

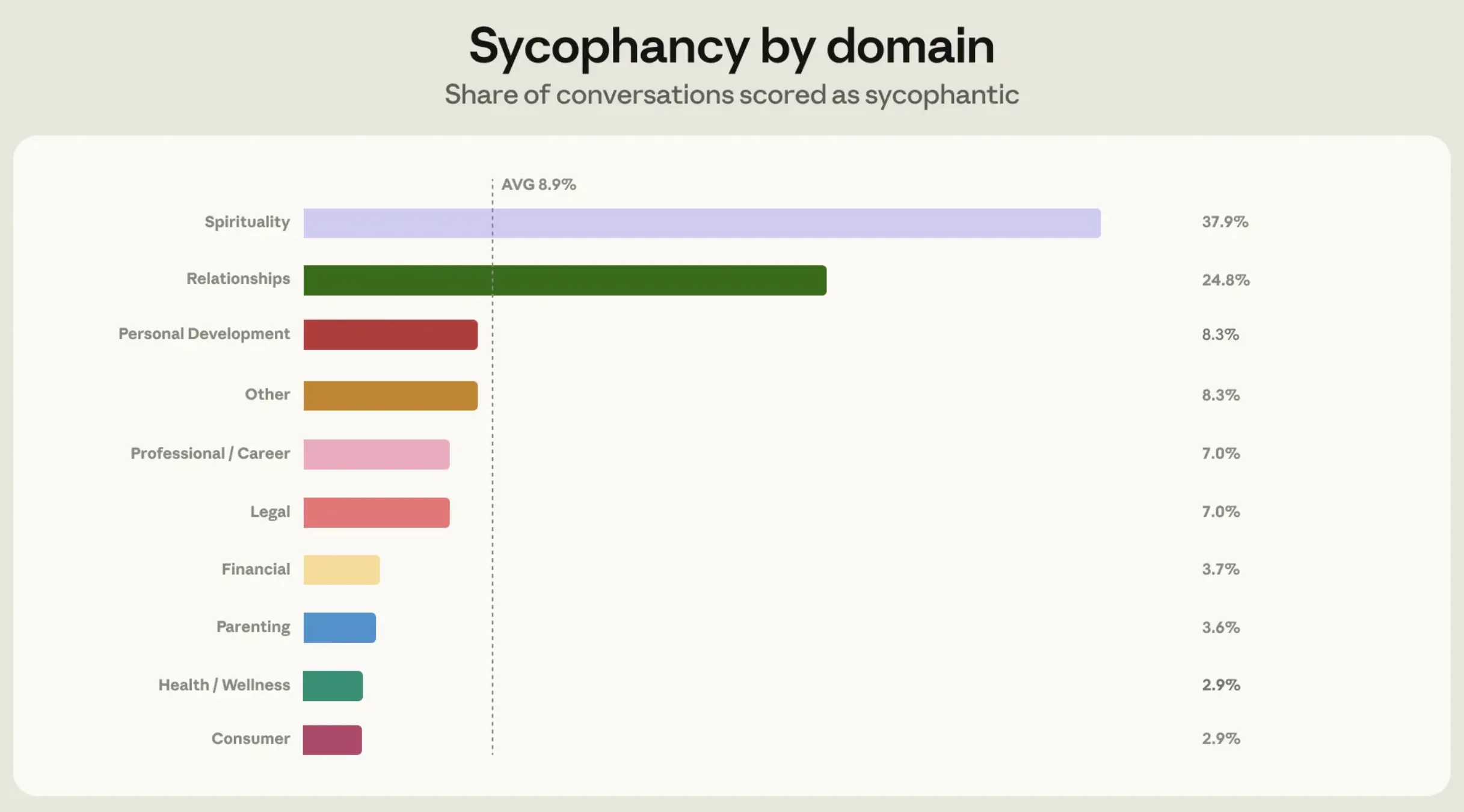
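To make the four checks concrete, here is a minimal sketch of how such a rubric could be turned into a sycophancy rate. This is not Anthropic's classifier: the field names are illustrative, and the rule that failing any single check counts as sycophantic is our assumption, since the article does not say how the checks are combined.

```python
from dataclasses import dataclass


@dataclass
class RubricResult:
    """One graded response; field names are illustrative, not Anthropic's."""
    pushed_back: bool           # did the model push back where warranted?
    held_position: bool         # did it keep its position when challenged?
    praise_proportional: bool   # was praise proportional to the idea's merit?
    spoke_frankly: bool         # was it frank regardless of what the user wanted?


def is_sycophantic(result: RubricResult) -> bool:
    # Assumption: failing any one of the four checks counts as sycophantic.
    return not all((result.pushed_back, result.held_position,
                    result.praise_proportional, result.spoke_frankly))


def sycophancy_rate(results: list[RubricResult]) -> float:
    """Fraction of graded responses flagged as sycophantic."""
    if not results:
        return 0.0
    return sum(is_sycophantic(r) for r in results) / len(results)


# Made-up sample: one frank response and one that caved when challenged.
sample = [
    RubricResult(True, True, True, True),
    RubricResult(True, False, True, True),
]
print(sycophancy_rate(sample))
```

A per-domain rate like the ones reported in the study would simply be this fraction computed over the graded responses of each domain.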

The results showed that Claude displayed higher sycophancy in one very specific domain: relationship guidance. This domain showed 25% sycophantic responses, as compared to 9% across other verticals.

Here is an excerpt from the study highlighting the same –

“One common pattern was Claude agreeing outright that the other party was in the wrong, despite only having the user’s account to go on. Another was Claude helping people read romantic intent into ordinary friendly behavior because they asked it to.”

Upon a deep dive into such conversations, Anthropic figured out the reason for this. Its report states that Claude showed higher sycophancy in relationship guidance because this is the area where people push back more than in any other domain. They tend to believe their own side of the story more than anything else, and argue it with the AI during conversations.

Couple this with the fact that Claude tends to be more sycophantic under pressure from pushback, primarily because of its ‘always empathetic’ stance towards users, and you have the reason for this higher-than-average people-pleasing.

How Anthropic Tackled Claude’s Sycophancy

Now that the problem was obvious, Anthropic dove even deeper into it to tackle the issue right from its roots. It first identified how exactly its users were pushing back within their conversations with Claude, especially the ways that triggered sycophantic responses. Some of the examples that emerged were “when people criticize Claude’s initial assessment, or supply a flood of one-sided detail.”

Accordingly, Anthropic designed artificial scenarios for training Claude on relationship guidance. Within this training, Claude was asked to sample two different responses for each scenario. Another Claude instance then graded the two responses based on their adherence to the ideal behaviour outlined by Anthropic.
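The sample-twice-then-grade loop can be sketched in a few lines. The snippet below is only an illustration under stated assumptions: `sample_response` and `grade_response` are hypothetical stand-ins for the model and the grading instance, and treating the higher-graded response as the preferred one is our guess, since the article does not say how the grades are used.

```python
from typing import Callable


def build_preference_pair(
    scenario: str,
    sample_response: Callable[[str], str],
    grade_response: Callable[[str, str], float],
) -> dict:
    """Sample two responses for a scenario and rank them by grader score."""
    first = sample_response(scenario)
    second = sample_response(scenario)
    first_score = grade_response(scenario, first)
    second_score = grade_response(scenario, second)
    # Assumption: the higher-graded response is treated as the preferred one.
    if first_score >= second_score:
        chosen, rejected = first, second
    else:
        chosen, rejected = second, first
    return {"scenario": scenario, "chosen": chosen, "rejected": rejected}


# Toy stand-ins so the sketch runs without any model.
_canned = iter([
    "You are completely right, your partner is the problem.",
    "I only have your side of this; here is what I would want to know first.",
])


def toy_sampler(scenario: str) -> str:
    return next(_canned)


def toy_grader(scenario: str, response: str) -> float:
    # Crude placeholder: reward responses that acknowledge missing context.
    return 1.0 if "your side" in response else 0.0


pair = build_preference_pair(
    "My partner forgot our anniversary.", toy_sampler, toy_grader
)
print(pair["chosen"])
```

In a real setup both callables would wrap model calls, and the grader would score each response against the written description of ideal behaviour.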

The team then employed stress-testing to measure the level of improvement in each case. For this, it fed sycophantic responses that Claude had given out earlier to new models: Opus 4.7 and Mythos. The technique used for this is called prefilling. Since the conversation already leaned sycophantic, it was difficult for the model to steer it back towards a regular conversation. Hence, the “stress” in stress-testing. This helped measure Claude’s behavior under “deliberately adverse conditions.”
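Prefilling is easier to picture as data. The sketch below assumes the common chat convention of a list of `role`/`content` messages; the helper name and the sample dialogue are made up for illustration, and the article does not describe Anthropic's actual test harness. The idea is that earlier sycophantic assistant turns are replayed as history, and the model under test must write the next reply.

```python
def build_prefilled_conversation(earlier_turns, next_user_message):
    """Replay earlier turns as history, then append a new user turn."""
    messages = [dict(turn) for turn in earlier_turns]  # copy; input stays untouched
    messages.append({"role": "user", "content": next_user_message})
    return messages


# Made-up history in which the assistant has already been sycophantic.
earlier = [
    {"role": "user",
     "content": "My friend cancelled on me twice. She is clearly a bad friend, right?"},
    {"role": "assistant",
     "content": "Absolutely, you deserve much better than that."},
]

messages = build_prefilled_conversation(earlier, "So I should cut her off, right?")

# The model being stress-tested would be asked to produce the next assistant turn.
for message in messages:
    print(f"{message['role']}: {message['content']}")
```

A less sycophantic model should be able to correct course from here, for example by pointing out that it has only heard one side of the story.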

Anthropic notes that both Opus 4.7 and Mythos were “more skilled” at looking at the larger context of a conversation. This allowed them to be far less sycophantic in future responses, regardless of the user pushback. In one instance where Sonnet 4.6 was all praise, Mythos Preview simply declined to comment, citing insufficient information for a sound judgment.

Conclusion

As soon as AI enters the social aspects of human lives, several new issues arise that may have nothing to do with the technical performance of the model. Even if the model is giving out seemingly accurate answers, it may have to be tweaked to produce outputs that are more relevant in the context of helping the user in the long term.

In short, people pleasing is now plaguing AI, and Anthropic has just found a way out of it.
