Instruction-based image editing models are impressive at following prompts. But when an edit involves physical interactions, they often violate real-world physics. In their paper “From Statics to Dynamics: Physics-Aware Image Editing with Latent Transition Priors,” the authors introduce PhysicEdit, a framework that treats image editing as a physical state transition rather than a static transformation between two images. This shift improves realism in physics-heavy scenarios.

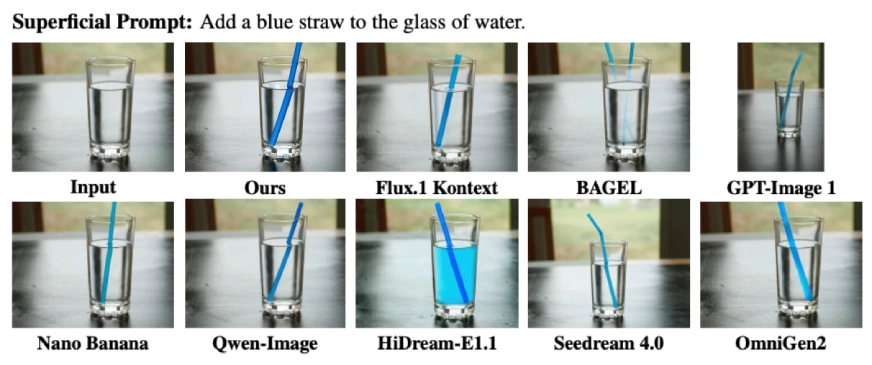

AI Image Generation Failures

Suppose you generate a room with a lamp and ask the model to turn it off. The lamp switches off, but the lighting in the room barely changes and the shadows no longer match the light sources. The instruction is followed, but the physics of illumination is ignored.

Now ask the model to insert a straw into a glass of water. The straw appears in the glass but stays perfectly straight instead of looking bent at the water line due to refraction. The edit looks correct at first glance, yet it violates optical physics. These are exactly the failures PhysicEdit aims to fix.

The Problem with Current Image Editing Models

Most instruction-based editing models follow a straightforward setup.

- You provide a source image.

- You provide an editing instruction.

- The model generates a modified image.

This works well for semantic edits like:

- Change the shirt color to blue

- Replace the dog with a cat

- Remove the chair

However, this setup treats editing as a static mapping between two images. It does not model the process that leads from the initial state to the final state.

This becomes a problem in physics-heavy scenarios such as:

- Insert a straw into a glass of water

- Let the ball fall onto the cushion

- Turn off the lamp

- Freeze the soda can

These edits require understanding how physical laws affect the scene over time. Without modeling that transition, the system often produces results that look plausible at first glance but break under closer inspection.

From Static Mapping to Physical State Transitions

PhysicEdit proposes a different formulation.

Instead of directly predicting the final image from the source image and instruction, it treats the instruction as a physical trigger. The source image represents the initial physical state of the scene. The final image represents the outcome after the scene evolves under physical laws.

In other words, editing is treated as a state evolution problem rather than a direct transformation.
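
The contrast can be written down compactly. The notation below is ours, introduced only for illustration, and is not taken from the paper: $I_{\text{src}}$ is the source image, $c$ the instruction, $f_\theta$ the editing model, and $T$ a hypothetical transition function that advances the scene state by one step.

```latex
% Static mapping: predict the target directly from source and instruction.
\hat{I}_{\text{tgt}} = f_\theta\left(I_{\text{src}},\, c\right)

% State transition: the instruction triggers an evolution through K steps.
s_0 = I_{\text{src}}, \qquad s_{k+1} = T\left(s_k,\, c\right), \qquad \hat{I}_{\text{tgt}} = s_K
```

In the second form, the output depends on the intermediate states $s_1, \dots, s_{K-1}$, which a pair of before and after images never shows.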

This distinction matters.

Traditional editing datasets only provide the starting image and the final image. The intermediate steps are missing. As a result, the model learns what the output should look like, but not how the scene should physically evolve to reach that state.

PhysicEdit addresses this limitation by learning from videos.

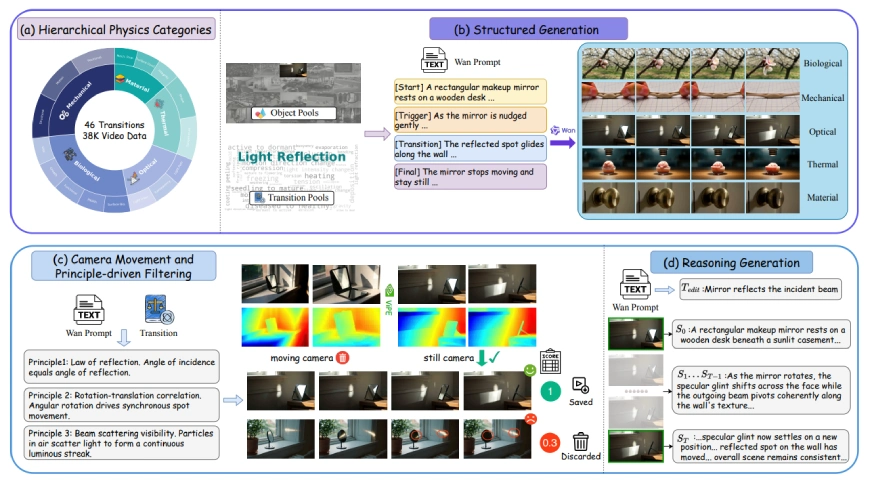

Introducing PhysicTran38K

To train a physics-aware editing model, the authors created a new dataset called PhysicTran38K. It contains approximately 38,000 video-instruction pairs focused specifically on physical transitions. The dataset covers five major domains:

- Mechanical

- Optical

- Biological

- Material

- Thermal

Across these domains, it defines 16 sub-domains and 46 transition types. Examples include:

- Light reflection

- Refraction

- Deformation

- Freezing

- Melting

- Germination

- Hardening

- Collapse

Each video captures a full transition from an initial state to a final state, including the intermediate steps. The dataset is built through a structured pipeline with several filtering steps:

- Videos are generated using prompts that explicitly define start state, trigger event, transition, and final state.

- Camera motion is filtered out so that pixel changes reflect physical evolution rather than viewpoint shifts.

- Physical principles are automatically verified to ensure consistency.

- Only transitions that pass these checks are retained.

This results in high-quality supervision for learning realistic physical dynamics.
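
The construction steps can be pictured as a small filtering pipeline. The sketch below is only an illustration under stated assumptions: the `CandidateClip` record, its field names, the `camera_motion` score, and the `0.1` threshold are all hypothetical and do not come from the paper or its code.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CandidateClip:
    """Hypothetical record for one generated video (field names are illustrative)."""
    start_state: str
    trigger_event: str
    transition: str
    final_state: str
    camera_motion: float    # assumed score in [0, 1]; 0.0 means a static camera
    physics_verified: bool  # assumed outcome of an automatic physics check


def build_prompt(clip: CandidateClip) -> str:
    """Spell out the four stages explicitly in the video-generation prompt."""
    return (
        f"Start state: {clip.start_state}. "
        f"Trigger: {clip.trigger_event}. "
        f"Transition: {clip.transition}. "
        f"Final state: {clip.final_state}."
    )


def filter_clips(clips: List[CandidateClip],
                 max_camera_motion: float = 0.1) -> List[CandidateClip]:
    """Keep clips with a near-static camera that also passed the physics check."""
    return [
        c for c in clips
        if c.camera_motion <= max_camera_motion and c.physics_verified
    ]


candidates = [
    CandidateClip("ice cube on a plate", "room warms up",
                  "ice melts into a puddle", "puddle of water on the plate",
                  camera_motion=0.02, physics_verified=True),
    CandidateClip("ball held above a cushion", "ball is released",
                  "ball falls and dents the cushion", "ball resting in a dent",
                  camera_motion=0.45, physics_verified=True),   # too much camera motion
    CandidateClip("straw next to a glass of water", "straw is inserted",
                  "straw enters the water", "straw looks unbent at the water line",
                  camera_motion=0.01, physics_verified=False),  # fails the physics check
]

retained = filter_clips(candidates)
print(build_prompt(retained[0]))
print(f"kept {len(retained)} of {len(candidates)} clips")
```

Only the first clip survives here: the second is dropped for camera motion and the third for failing the physics check, which mirrors the two filters described in the list.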

How PhysicEdit Works

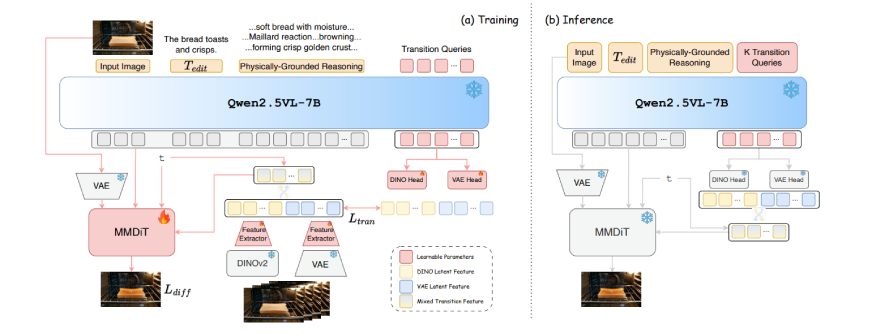

PhysicEdit builds on top of Qwen-Image-Edit, a diffusion-based editing backbone. To incorporate physics, it introduces a dual-thinking mechanism with two components:

- Physically grounded reasoning

- Implicit visual thinking

These two streams complement each other and address different aspects of physical realism.

Dual-Thinking: Reasoning and Visual Transition Priors

Physically Grounded Reasoning

PhysicEdit uses a frozen Qwen2.5-VL-7B model to generate structured reasoning before image generation begins.

Given the source image and instruction, it produces:

- The physical laws involved

- Constraints that must be respected

- A description of how the change should unfold

This reasoning trace becomes part of the conditioning context for the diffusion model. It ensures the edit respects causality and domain knowledge.

The reasoning model remains frozen during training, which helps preserve its general knowledge.
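
To make the idea of a structured reasoning trace concrete, here is a minimal sketch of how the three parts might be packed into one conditioning string. The `ReasoningTrace` class, the `build_conditioning_text` helper, and the text layout are assumptions made for illustration; the article does not describe the actual prompt format, and no real model is called here.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ReasoningTrace:
    """Hypothetical container for the three parts of the reasoning output."""
    laws: List[str]
    constraints: List[str]
    unfolding: str


def build_conditioning_text(instruction: str, trace: ReasoningTrace) -> str:
    """Concatenate the instruction and reasoning trace into one conditioning string."""
    lines = [f"Instruction: {instruction}", "Physical laws:"]
    lines += [f"- {law}" for law in trace.laws]
    lines.append("Constraints:")
    lines += [f"- {c}" for c in trace.constraints]
    lines.append(f"Expected transition: {trace.unfolding}")
    return "\n".join(lines)


# Hand-written example trace; in the described system a vision-language model
# would produce this content from the source image and the instruction.
trace = ReasoningTrace(
    laws=["Refraction at the air-water boundary"],
    constraints=[
        "The straw must appear displaced at the water line",
        "The glass itself stays unchanged",
    ],
    unfolding="The straw enters the water and its submerged part appears shifted.",
)
text = build_conditioning_text("Insert a straw into a glass of water", trace)
print(text)
```

Keeping the trace as structured fields rather than free text makes it easy to inspect which law or constraint the edit was supposed to respect when a result looks wrong.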

Implicit Visual Thinking

Text reasoning alone cannot capture fine-grained visual effects such as:

- Subtle deformation

- Texture transitions during melting

- Light scattering

To handle this, PhysicEdit introduces learnable transition queries.

These queries are trained using intermediate frames from the PhysicTran38K videos. Two encoders supervise them:

- DINOv2 features for structural information

- VAE features for texture-level detail

During training, the model aligns the transition queries with visual features extracted from intermediate states. At inference time, no intermediate frames are available. Instead, the learned transition queries act as distilled transition priors, guiding the model toward physically plausible outputs.
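
The alignment step can be sketched as a feature-matching loss. The version below is a toy, pure-Python illustration and not the paper's objective: the use of cosine distance for both encoders, the `texture_weight` parameter, and all function names are assumptions. A real implementation would operate on tensors from the actual DINOv2 and VAE encoders.

```python
import math
from typing import Sequence

Vector = Sequence[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine similarity of two equal-length vectors; 0.0 if either has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def alignment_loss(queries: Sequence[Vector], targets: Sequence[Vector]) -> float:
    """Mean (1 - cosine similarity) over paired query and target vectors."""
    if len(queries) != len(targets) or not queries:
        raise ValueError("queries and targets must be non-empty and the same length")
    total = sum(1.0 - cosine_similarity(q, t) for q, t in zip(queries, targets))
    return total / len(queries)


def transition_prior_loss(structure_queries: Sequence[Vector],
                          structure_feats: Sequence[Vector],
                          texture_queries: Sequence[Vector],
                          texture_feats: Sequence[Vector],
                          texture_weight: float = 1.0) -> float:
    """Sum of a structural term and a weighted texture term (assumed form)."""
    structural = alignment_loss(structure_queries, structure_feats)
    texture = alignment_loss(texture_queries, texture_feats)
    return structural + texture_weight * texture


# Toy features standing in for encoder outputs on intermediate frames.
structure_q = [[1.0, 0.0], [0.0, 1.0]]
structure_f = [[1.0, 0.0], [0.0, 1.0]]  # queries already match these targets
texture_q = [[1.0, 0.0]]
texture_f = [[0.0, 1.0]]                # query is orthogonal to this target

loss = transition_prior_loss(structure_q, structure_f, texture_q, texture_f)
print(loss)
```

In this toy case the structural term contributes nothing because the queries already match their targets, so the entire loss comes from the misaligned texture query. At inference time no targets exist, so only the trained queries would be used.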

Why Video Matters for Learning Physics

With image-only supervision, the model sees only the initial and final states. With video supervision, it sees how the scene evolves step by step. This additional information constrains the learning process. It teaches the model not just what the outcome should look like, but how it should develop over time. PhysicEdit compresses this dynamic information into latent representations so that editing remains efficient and single-image based during inference.

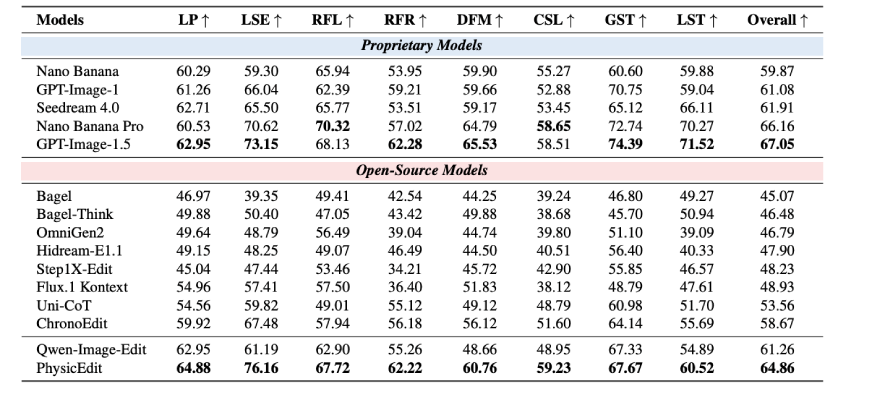

Results on PICABench and KRISBench

PhysicEdit was evaluated on two benchmarks, PICABench and KRISBench.

PICABench Results

PICABench focuses on physical realism, including optics, mechanics, and state transitions. Compared to its backbone model, PhysicEdit improves overall physical realism by approximately 5.9%. The largest gains appear in categories requiring implicit dynamics, including:

- Light source effects

- Deformation

- Causality

- Refraction

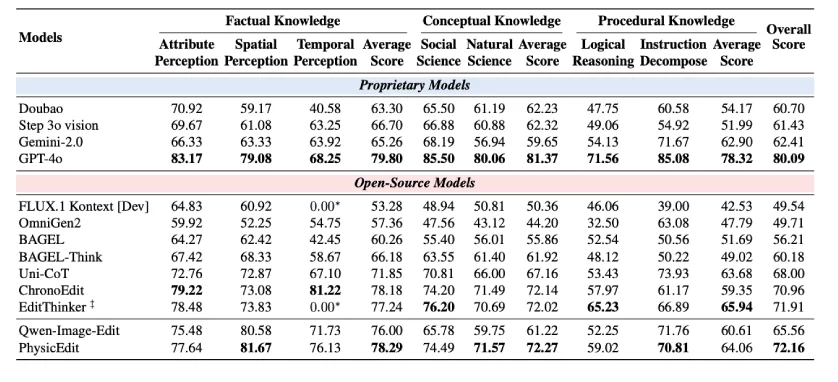

KRISBench Results

On KRISBench, which evaluates knowledge-grounded editing, PhysicEdit improves overall performance by around 10.1%. Improvements are particularly noticeable in:

- Temporal perception

- Natural science reasoning

These results suggest that modeling editing as state transitions improves both visual fidelity and physics-related reasoning.

Why This Matters for AI Systems

As generative models become more integrated into creative tools, augmented reality systems, and multimodal agents, physical plausibility becomes increasingly important. Visually inconsistent lighting, unrealistic deformation, or broken causality can reduce reliability and trust.

PhysicEdit demonstrates that:

- Physics can be learned effectively from video data

- Transition priors can be distilled into compact latent representations

- Text reasoning and visual supervision can work together

This represents a meaningful step toward more world-consistent generative models.

Conclusion

Most image editing models treat editing as a static transformation problem. PhysicEdit reframes it as a physical state transition problem. By combining video-based supervision, physically grounded reasoning, and learned transition priors, it produces edits that are not only semantically correct but physically plausible. The dataset, code, and checkpoints are open-sourced, making it accessible for researchers and engineers who want to build more realistic editing systems. As generative AI continues to evolve, incorporating physical consistency may move from being a research innovation to a standard requirement.

Note: All images and information in this blog are sourced from the research paper named in the introduction.
